I’ve finally got a working config for Apache with PHP-FPM, per-vhost pools, UIDs and chroots. There are lots of tutorials around the net for setting up FPM with nginx, but very few for Apache. The following instructions are for FreeBSD, but they should be easy to adapt to almost any OS. This document is still evolving, but I wanted to get it out to the people in #php-fpm on FreeNode who have been asking for help.

Why am I setting up PHP like this??

I have been using Apache with mod_php for years, and it works, but it has a number of problems, especially in a virtual hosting situation. All PHP scripts run with the webserver’s UID, which is crummy for security. Users’ scripts can see the whole file system. And because the interpreter is built into every Apache process, even a process serving a non-PHP request, such as an image or style sheet, carries the whole PHP interpreter around, using a bunch of memory.

This setup addresses each of these issues, hopefully making PHP sites more secure and less memory hungry. Instead of embedding the PHP interpreter in Apache with mod_php, Apache uses the “FastCGI” protocol to pass PHP requests to a long-running “PHP-FPM” server. Each website I’m hosting has its own configuration, runs under its own UID, and is chroot-ed in its owner’s home directory. Only PHP requests are handled by PHP-FPM; everything else stays in Apache.

Let’s get to the details

We’re going to install and configure a bunch of software, and then set up a chroot environment.

Install Apache 2.2

cd /usr/ports/www/apache22

make install clean

Be sure to enable suexec in the Apache options dialog.

Enable Apache

Add apache22_enable="YES" to /etc/rc.conf and start it up:

service apache22 start
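If you script these steps, it helps to make the rc.conf edit safe to repeat. Here is a minimal POSIX sh sketch; rc_enable is a hypothetical helper name, not a FreeBSD tool (newer FreeBSD releases ship sysrc(8), which covers the same ground). It relies on rc.conf being plain sh, so a later assignment overrides an earlier one.

```shell
# rc_enable FILE VAR: append VAR="YES" to FILE unless that exact line is
# already present. Hypothetical helper; FILE is normally /etc/rc.conf.
rc_enable() {
    file=$1
    var=$2
    # Already enabled: nothing to do.
    if grep -q "^${var}=\"YES\"" "$file" 2>/dev/null; then
        return 0
    fi
    # rc.conf is sourced as sh, so this later line wins over any
    # earlier VAR="NO".
    printf '%s="YES"\n' "$var" >> "$file"
}
```

For example, `rc_enable /etc/rc.conf apache22_enable` here, and `rc_enable /etc/rc.conf php_fpm_enable` when you get to PHP-FPM below.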

Install PHP-FPM

cd /usr/ports/lang/php5

make install clean

- Do NOT build the Apache module.

- DO build the FPM version

- Building the CGI and CLI versions is fine as well

- I also enable the mailhead patch

Install the PHP extensions

This is a bit of a FreeBSD-ism that you won’t need on most other OSes. FreeBSD strips the PHP port down to a bare minimum and moves all the extensions, including the default ones, into their own ports. The php5-extensions meta-port pulls them all in at once.

cd /usr/ports/lang/php5-extensions

make install clean

Add php_fpm_enable="YES" to /etc/rc.conf and start it up:

service php-fpm start

Install mod_fastcgi

cd /usr/ports/www/mod_fastcgi/

make install clean

Edit httpd.conf, inserting:

LoadModule fastcgi_module libexec/apache22/mod_fastcgi.so

LoadModule suexec_module libexec/apache22/mod_suexec.so

and setting:

ServerAdmin webmaster@example.com

ServerName server_ip_address_or_working_hostname

Then uncomment whichever “Include” directives make sense for your setup.

Now append:

NameVirtualHost *:80

Include etc/apache22/Includes/*.conf

and comment out this block:

#<Directory />

# AllowOverride None

# Order deny,allow

# Deny from all

#</Directory>

Yes, that super-sucks, since that block is Apache’s default deny for the whole filesystem. Does anyone know of a workaround?

I like to keep each vhost’s configuration in its own file, in a “vhosts/” directory, so I append:

Include etc/apache22/vhosts/*.conf

and, from /usr/local/etc/apache22, create the directories:

mkdir vhosts disabled-vhosts

You can guess what the second directory is for. Now restart Apache and see if that works:

service apache22 restart

You may get a warning like “NameVirtualHost *:80 has no VirtualHosts” because we haven’t added any yet. Nothing to worry about.
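Moving a config file between the two directories is all it takes to switch a site on or off. A minimal sh sketch, assuming the FreeBSD layout used here; vhost_enable, vhost_disable and the APACHE_ETC variable are hypothetical names:

```shell
# Hypothetical helpers for the vhosts/ and disabled-vhosts/ layout.
# APACHE_ETC defaults to the FreeBSD apache22 config directory.
: "${APACHE_ETC:=/usr/local/etc/apache22}"

# vhost_disable NAME: move NAME.conf out of the Include etc/apache22/vhosts/*.conf path.
vhost_disable() {
    mv "$APACHE_ETC/vhosts/$1.conf" "$APACHE_ETC/disabled-vhosts/$1.conf"
}

# vhost_enable NAME: move NAME.conf back so the Include picks it up again.
vhost_enable() {
    mv "$APACHE_ETC/disabled-vhosts/$1.conf" "$APACHE_ETC/vhosts/$1.conf"
}
```

Run `service apache22 restart` afterwards so Apache rereads its Include directives.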

Next, create Includes/php-fpm.conf (in /usr/local/etc/apache22) for global FPM settings that apply to every site. Mine looks like this:

FastCgiIpcDir /usr/local/etc/php-fpm/

FastCgiConfig -autoUpdate -singleThreshold 100 -killInterval 300 -idle-timeout 240 -maxClassProcesses 1 -pass-header HTTP_AUTHORIZATION

FastCgiWrapper /usr/local/sbin/suexec

<FilesMatch \.php$>

SetHandler php5-fcgi

</FilesMatch>

Action php5-fcgi /fcgi-bin

<Directory /usr/local/sbin>

Options ExecCGI FollowSymLinks

SetHandler fastcgi-script

Order allow,deny

Allow from all

</Directory>

See if Apache likes that:

service apache22 restart

Configure FPM

Now FPM needs some configuration. Create a directory to store per-vhost fpm configs:

mkdir /usr/local/etc/fpm.d

Then edit the global /usr/local/etc/php-fpm.conf, uncommenting:

include=etc/fpm.d/*.conf

switching the listen statement from a TCP port to a Unix socket:

listen = /tmp/php-fpm.sock

and changing the pm to:

pm = ondemand

There are three types of process manager (pm): static, dynamic and ondemand. With ondemand, FPM preforks zero processes; workers are forked only when requests arrive. I chose this because I host lots of small sites. You may want a model that suits your setup better.
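If you would rather script the three php-fpm.conf edits, here is a sh sketch using sed. fpm_global_patch is a hypothetical helper; it assumes the stock file has `;include=etc/fpm.d/*.conf`, `listen = ...` and `pm = ...` lines starting at the beginning of a line, and it writes to a temporary file instead of using sed -i, whose syntax differs between BSD and GNU sed.

```shell
# fpm_global_patch FILE: uncomment the fpm.d include, switch listen to a
# Unix socket and set pm to ondemand. Hypothetical helper.
fpm_global_patch() {
    conf=$1
    tmp="$conf.tmp.$$"
    # "^pm = " does not match pm.max_children etc., only the pm line itself.
    sed \
        -e 's|^;*[[:space:]]*include=etc/fpm\.d/\*\.conf|include=etc/fpm.d/*.conf|' \
        -e 's|^listen = .*|listen = /tmp/php-fpm.sock|' \
        -e 's|^pm = .*|pm = ondemand|' \
        "$conf" > "$tmp" && mv "$tmp" "$conf"
}
```

Usage would be `fpm_global_patch /usr/local/etc/php-fpm.conf`, after taking a backup of the file.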

Now let’s create a vhost. Given a site named “example.com” owned by user “luser”, here’s my template, which goes in vhosts/example.com.conf:

<VirtualHost *:80>

ServerName www.example.com

DocumentRoot /home/luser/example.com/htdocs

SuexecUserGroup luser luser

ServerAlias example.com

ErrorLog /home/luser/example.com/logs/example.com.error_log

CustomLog /home/luser/example.com/logs/example.com.access_log combined

<Directory "/home/luser/example.com/htdocs">

Order allow,deny

Allow from all

Options +Indexes +FollowSymLinks +ExecCGI +Includes +MultiViews

AllowOverride All

</Directory>

FastCgiExternalServer /tmp/fpm-example.com -socket /tmp/php-fpm-example.com.sock -user luser -group luser

Alias /fcgi-bin /tmp/fpm-example.com

<Location /fcgi-bin>

Options +ExecCGI

Order allow,deny

Allow from all

</Location>

<LocationMatch "/(ping|fpm-status)">

SetHandler php5-fcgi-virt

Action php5-fcgi-virt /fcgi-bin virtual

</LocationMatch>

</VirtualHost>
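With more than a handful of sites, generating the vhost from the template avoids typos. A sketch in sh, assuming every site follows the /home/USER/DOMAIN/{htdocs,logs} layout and the www. ServerName used above; make_vhost is a hypothetical helper:

```shell
# make_vhost DOMAIN USER: print the vhost template above for one site.
# Hypothetical helper; assumes the /home/USER/DOMAIN layout used in this post.
make_vhost() {
    domain=$1
    user=$2
    root="/home/$user/$domain"
    cat <<EOF
<VirtualHost *:80>
    ServerName www.$domain
    ServerAlias $domain
    DocumentRoot $root/htdocs
    SuexecUserGroup $user $user
    ErrorLog $root/logs/$domain.error_log
    CustomLog $root/logs/$domain.access_log combined
    <Directory "$root/htdocs">
        Order allow,deny
        Allow from all
        Options +Indexes +FollowSymLinks +ExecCGI +Includes +MultiViews
        AllowOverride All
    </Directory>
    FastCgiExternalServer /tmp/fpm-$domain -socket /tmp/php-fpm-$domain.sock -user $user -group $user
    Alias /fcgi-bin /tmp/fpm-$domain
    <Location /fcgi-bin>
        Options +ExecCGI
        Order allow,deny
        Allow from all
    </Location>
    <LocationMatch "/(ping|fpm-status)">
        SetHandler php5-fcgi-virt
        Action php5-fcgi-virt /fcgi-bin virtual
    </LocationMatch>
</VirtualHost>
EOF
}
```

For example, `make_vhost example.com luser > /usr/local/etc/apache22/vhosts/example.com.conf`. Apache refuses to start if it cannot open the ErrorLog, so create /home/luser/example.com/logs first.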

And create a complementary FPM pool config, for example /usr/local/etc/fpm.d/example.com.conf:

[example.com]

user = luser

group = luser

listen = /tmp/php-fpm-example.com.sock

chroot = /home/luser

pm = ondemand

pm.max_children = 50

pm.status_path = /fpm-status

php_admin_value[doc_root] = /example.com/htdocs

php_admin_value[cgi.fix_pathinfo] = 0

php_admin_value[sendmail_path] = /bin/mini_sendmail -t -fwebmaster@internal.org
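Like the vhost, the pool config only varies by domain and user, so it can be generated. A sketch with a hypothetical make_pool helper; the default sender of webmaster@DOMAIN is my assumption (the example above uses webmaster@internal.org), and an optional third argument overrides it:

```shell
# make_pool DOMAIN USER [SENDER]: print the FPM pool config above for one site.
# Hypothetical helper; SENDER defaulting to webmaster@DOMAIN is an assumption.
make_pool() {
    domain=$1
    user=$2
    sender=${3:-webmaster@$domain}
    cat <<EOF
[$domain]
user = $user
group = $user
listen = /tmp/php-fpm-$domain.sock
chroot = /home/$user
pm = ondemand
pm.max_children = 50
pm.status_path = /fpm-status
php_admin_value[doc_root] = /$domain/htdocs
php_admin_value[cgi.fix_pathinfo] = 0
php_admin_value[sendmail_path] = /bin/mini_sendmail -t -f$sender
EOF
}
```

For example, `make_pool example.com luser > /usr/local/etc/fpm.d/example.com.conf`, then `service php-fpm restart`.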

Living in a chroot

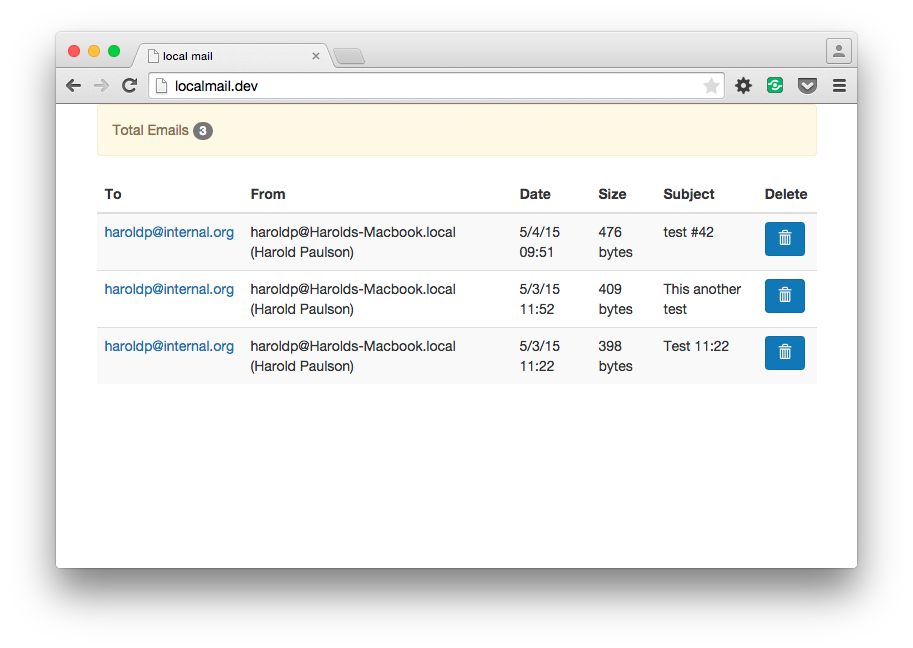

PHP’s mail() function invokes your system’s sendmail binary, usually /usr/sbin/sendmail. From within a chroot, that won’t be available. Even if you copied sendmail and every library it needs into the chroot, it would still want to write files to /var/spool, which isn’t available either. We need a workaround.

Install mini_sendmail. It is a sendmail workalike that you can easily copy into a chroot, and instead of writing to /var/spool, it makes an SMTP connection to localhost. Be sure to set the envelope sender with -f in your FPM pool config; otherwise mini_sendmail falls back to a username (taken from the environment when PHP or mini_sendmail was compiled) at the machine name. PHP scripts can still override the sender using the additional_parameters argument of mail().

cd /usr/ports/mail/mini_sendmail

make install clean

Create a chroot environment for the vhost:

mkdir ~luser/tmp ~luser/bin

chown luser:luser ~luser/tmp

ln /tmp/mysql.sock ~luser/tmp/

cp /rescue/sh ~luser/bin/sh

ln /usr/local/bin/mini_sendmail ~luser/bin/mini_sendmail

PHP will need a /tmp directory that it can write to. If you are using MySQL, you will need to hard link your mysql.sock into there or use TCP connections. If you link the socket, you need to redo that EVERY time you restart MySQL, because mysqld creates a fresh socket file on startup and the old link points at the stale one. (I should include my rc script here.) Hard link mini_sendmail into the chroot as well.

And finally, PHP needs a shell to invoke sendmail, since mail() runs sendmail_path through /bin/sh. Yes, this sucks. You can copy /bin/sh in, but chances are it needs libraries that aren’t in the chroot. I could copy those too, but instead I just copied the crunched binary from FreeBSD’s /rescue dir, which is statically linked. Yes, this sucks even more, because it includes stuff I don’t want or need, and I need a better solution. TODO: crunch my own sh with a couple of other useful items. Maybe use busybox for this?
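The chroot steps can be wrapped up per site. A minimal sh sketch, to be run as root; make_chroot is a hypothetical helper whose defaults are the FreeBSD paths from this post, the chown of tmp is my assumption (PHP runs as the site owner and needs to write there), and hard links only work when source and target are on the same filesystem.

```shell
# make_chroot HOMEDIR OWNER [SH] [SENDMAIL] [MYSQL_SOCK]: build the minimal
# chroot described above. Hypothetical helper; defaults are this post's paths.
make_chroot() {
    home=$1
    owner=$2
    sh_src=${3:-/rescue/sh}
    sendmail_src=${4:-/usr/local/bin/mini_sendmail}
    mysql_sock=${5:-/tmp/mysql.sock}

    mkdir -p "$home/tmp" "$home/bin" || return 1
    # PHP runs as the site owner, so it must be able to write to its /tmp.
    chown "$owner" "$home/tmp" || return 1
    # Statically linked shell, because mail() runs sendmail_path via /bin/sh.
    cp "$sh_src" "$home/bin/sh" || return 1
    # Hard link (same filesystem only); -f replaces an existing link.
    ln -f "$sendmail_src" "$home/bin/mini_sendmail" || return 1
    # Only link the MySQL socket if it exists, i.e. mysqld is running.
    if [ -S "$mysql_sock" ]; then
        ln -f "$mysql_sock" "$home/tmp/" || return 1
    fi
}
```

Because it uses mkdir -p and ln -f, re-running it (for example `make_chroot /home/luser luser`) after a MySQL restart refreshes the socket link.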

Set the upload temp dir in php.ini to the chroot’s /tmp:

upload_tmp_dir = /tmp

Update #1

I had a problem with several server variables (DOCUMENT_ROOT, PATH_TRANSLATED and SCRIPT_FILENAME) not being translated into paths inside the chroot, so I added an auto_prepend_file directive to each php-fpm pool config:

php_admin_value[auto_prepend_file] = /bin/phpfix

Then I linked a file with the following contents into each chroot’s ~/bin/ directory as phpfix:

<?php

// Make the path variables match the view from inside the chroot.

$_SERVER['DOCUMENT_ROOT'] = ini_get('doc_root');

$_SERVER['PATH_TRANSLATED'] = str_replace($_SERVER['HOME'], '', $_SERVER['PATH_TRANSLATED']);

$_SERVER['SCRIPT_FILENAME'] = str_replace($_SERVER['HOME'], '', $_SERVER['SCRIPT_FILENAME']);
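To check what the prepend file does to a given path, here is the same mapping as a sh sketch. to_chroot_path is hypothetical, and it differs from str_replace() in one way: it strips HOME only as a leading prefix, not wherever it occurs in the path.

```shell
# to_chroot_path HOME PATH: strip the chroot directory from the front of a
# host path, e.g. /home/luser/example.com/htdocs/index.php becomes
# /example.com/htdocs/index.php. Paths outside HOME are returned unchanged.
to_chroot_path() {
    # Quoting "$1" makes it a literal prefix rather than a glob pattern.
    printf '%s\n' "${2#"$1"}"
}
```

For example, `to_chroot_path /home/luser /home/luser/example.com/htdocs/index.php` gives the same value the prepend file assigns to SCRIPT_FILENAME.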

Update #2

PHP’s stream functions (like file_get_contents()) rely on OpenSSL for HTTPS URLs, and many other extensions (like SOAP) in turn rely on those streams. Curl seems to work just fine in a chroot, but PHP’s OpenSSL streams need certain device nodes. You will have to mount /dev (on FreeBSD, a devfs instance) inside your chroot in order to use them. More on this when I get a good system in place.